Kubernetes 1.36: What's New — GA Features, Removals & Upgrade Guide

While Kubernetes started its journey as an orchestration platform for web services, it has since transformed into something much broader. With the rise of AI, it is no longer just about orchestration; it is becoming a factory where AI workloads can live and run alongside traditional services. Each release nudges it further in that direction.

1.36 is no exception. Technical debt continues to be paid off, security gets tightened, and best practices are baked in more deeply. And with GPU-related updates in the mix, this release actually marks a meaningful leap for AI-specific workloads, even if it does not shout about it.

What's Inside?

Some of these have been on the wishlist for a while. Webhook servers that need babysitting, gitRepo volumes that probably shouldn't have existed in the first place, Jobs you couldn't touch while suspended. 1.36 takes care of a good chunk of that list. Here's what's in:

1- Security & Policy: MutatingAdmissionPolicy targets GA, fine-grained kubelet API authorization reaches stable

2- Storage: Volume Group Snapshot targets GA, SELinux mount relabeling targets GA, gitRepo volume permanently disabled

3- Workload Management: Mutable scheduling directives and resource requests for suspended Jobs are now enabled by default

4- Developer Experience: kubectl kuberc promoted to Beta, ARCH column added to node output

5- Other Notable Changes: kubectl diff --show-secret, PreBind parallelization, audit log rotation behavior change

6- Removals: gitRepo volume, flex-volume support in kubeadm, Portworx in-tree driver

Who's Affected and How

This release quietly improves the day-to-day for most teams. Here's where you'll feel it:

Platform / Infra teams: If you've been maintaining a webhook server just for mutations, that overhead is gone. MutatingAdmissionPolicy going GA means you can define the same logic as a native Kubernetes object and move on. Also worth a look before upgrading: IP/CIDR validation is now a hard rejection, not just a warning.

ML / AI teams: Suspending a Job mid-pipeline and resuming it with updated resource requests or node selectors is now the default behavior. If you've been working around this with custom controllers or killing and restarting jobs entirely, that workaround can go.

Security teams: Fine-grained kubelet API authorization reaching stable means a compromised node credential no longer implies full kubelet access. Combined with the permanent removal of gitRepo volumes, the cluster's attack surface got meaningfully smaller in this release.

Developer / DevOps teams: kubectl kuberc hitting Beta means your aliases and preferences finally live separately from kubeconfig, no env var needed. Small thing, but it adds up over a long day.

Security & Policy

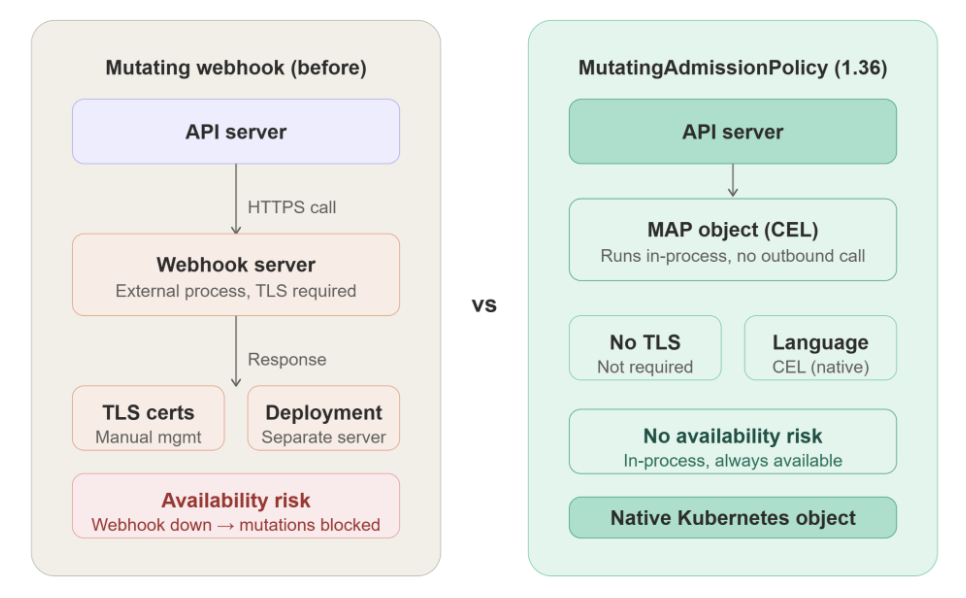

The most significant security change in this release comes in the admission layer. MutatingAdmissionPolicy targets GA and is now enabled by default. What this really means is that the overhead of spinning up and maintaining a separate webhook server is gone. Instead of dealing with TLS certificates, server deployments, and availability concerns, you define mutations as native Kubernetes objects using CEL (Common Expression Language) expressions.

This is a more Kubernetes-native approach, and it keeps things where they belong. Management, networking, and security all stay inside the cluster. No outbound calls, no external dependencies, no single point of failure when a webhook goes down.

Going a bit deeper: every request hitting the API Server passes through a fixed pipeline: authentication → authorization → mutating admission → schema validation → validating admission. Mutating admission is the only point where a request can still be modified before being written to etcd. With MAP, the CEL expression runs inside the API Server's own process, which is exactly where it should be.

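    apiVersion: admissionregistration.k8s.io/v1
    kind: MutatingAdmissionPolicy
    metadata:
      name: add-default-label
    spec:
      # Set explicitly here for clarity: reject the request if the policy
      # cannot be evaluated, and do not re-run it after other mutations.
      failurePolicy: Fail
      reinvocationPolicy: Never
      matchConstraints:
        resourceRules:
          - apiGroups: ["apps"]
            apiVersions: ["v1"]
            operations: ["CREATE"]
            resources: ["deployments"]
      mutations:
        - patchType: ApplyConfiguration
          applyConfiguration:
            # CEL expression that merges a label into the incoming object
            expression: >
              Object{metadata: Object.metadata{
                labels: {"managed-by": "platform-team"}
              }}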

Note: If you are using OPA Gatekeeper or a similar tool purely for mutation (not validation), now is a good time to evaluate whether this native feature covers your use case. Keep in mind that MAP is not a full replacement for every scenario just yet:

- CEL expressions can't make external calls

- Complex multi-step mutation logic gets unwieldy fast

- Doesn't cover everything Gatekeeper does on the validation side
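A policy on its own does nothing until it is bound. As a minimal sketch, a binding for the add-default-label policy could look like the following. The binding name and the namespace label are made up for illustration, and the apiVersion assumes the v1 API is served in your cluster, as in the policy example.

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: MutatingAdmissionPolicyBinding
metadata:
  name: add-default-label-binding        # hypothetical name
spec:
  # Must match metadata.name of the MutatingAdmissionPolicy
  policyName: add-default-label
  # Optional: narrow the policy to namespaces carrying a given label.
  # The label below is an assumption for illustration only.
  matchResources:
    namespaceSelector:
      matchLabels:
        environment: production
```

Leaving out matchResources applies the policy everywhere its matchConstraints allow, so starting with a narrow selector is usually the safer rollout.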

Another important step on the security side: fine-grained kubelet API authorization reaches stable in this release. It allows more precise control over which clients can call which kubelet endpoints, so even if a node-level credential is compromised, the blast radius can be meaningfully contained.
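To make that concrete, here is a rough sketch of what a narrowly scoped grant can look like with fine-grained authorization: a monitoring agent gets read access to the kubelet's health and pod-listing endpoints through the nodes/healthz and nodes/pods subresources, without the broad nodes/proxy permission. The role, binding, and ServiceAccount names are hypothetical.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kubelet-read-health-and-pods     # hypothetical name
rules:
  - apiGroups: [""]
    # Fine-grained kubelet subresources instead of the catch-all nodes/proxy
    resources: ["nodes/healthz", "nodes/pods"]
    verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-monitor-kubelet-read        # hypothetical name
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubelet-read-health-and-pods
subjects:
  - kind: ServiceAccount
    name: node-monitor                   # hypothetical ServiceAccount
    namespace: monitoring
```

If an agent currently holds nodes/proxy only to scrape health or pod data, swapping it for a role like this is the point where the reduced blast radius actually materializes.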

Storage

Alongside the security changes, 1.36 is also a busy release for storage. Several features from SIG-Storage are targeting GA at the same time.

Volume Group Snapshot targets GA. Consider a disaster scenario, such as a Dubai AWS data center going down. To minimize both RPO and RTO, you need a recovery point that is actually consistent. When disks are snapshotted separately, there is an inevitable time gap between them, and that gap can corrupt your recovery state. Volume Group Snapshot closes that gap by asking the storage backend, through the CSI driver, to snapshot every volume in the group at the same point in time. The result is a crash-consistent recovery point across volumes, without application-level coordination like pg_start_backup() and without code changes. A solid step forward for disaster recovery.

    apiVersion: groupsnapshot.storage.k8s.io/v1beta1
    kind: VolumeGroupSnapshot
    metadata:
      name: database-group-snapshot
    spec:
      volumeGroupSnapshotClassName: csi-groupsnapshot-class
      source:
        selector:
          matchLabels:
            app: postgres
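The snapshot above leans on two things it does not show: a VolumeGroupSnapshotClass named csi-groupsnapshot-class, and PVCs labeled app: postgres for the selector to pick up. A hedged sketch of both follows. The driver name, StorageClass, PVC name, and size are placeholders, and the apiVersion simply mirrors the snapshot example, so use whichever version your cluster and driver actually serve.

```yaml
apiVersion: groupsnapshot.storage.k8s.io/v1beta1   # mirrors the snapshot example
kind: VolumeGroupSnapshotClass
metadata:
  name: csi-groupsnapshot-class
driver: csi.example.com            # hypothetical CSI driver name
deletionPolicy: Delete
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pgdata                     # hypothetical PVC name
  labels:
    app: postgres                  # matched by the group snapshot selector
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: csi-example-sc # hypothetical StorageClass
  resources:
    requests:
      storage: 100Gi
```

Every PVC that should be part of the same recovery point needs the same label, and all of them must be backed by the driver named in the class.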

SELinux Mount Relabeling also targets GA (via the SELinuxChangePolicy feature gate). Even without having hit this issue firsthand, the I/O efficiency gains here are clear. Previously, every file on the volume had to be relabeled recursively, with the security.selinux extended attribute updated inode by inode. On a 100GB disk, that can mean millions of inodes and several minutes of container startup delay. For agents like Instana that auto-instrument applications, that kind of overhead adds up fast. The new approach assigns a virtual label to the entire mount point via mount -o context=..., so not a single inode is touched and the process completes in milliseconds regardless of disk size.

CSIServiceAccountTokenSecrets also targets GA. This allows CSI drivers to receive service account tokens through the secrets field in the volume context, giving drivers that integrate with external identity systems more flexibility.

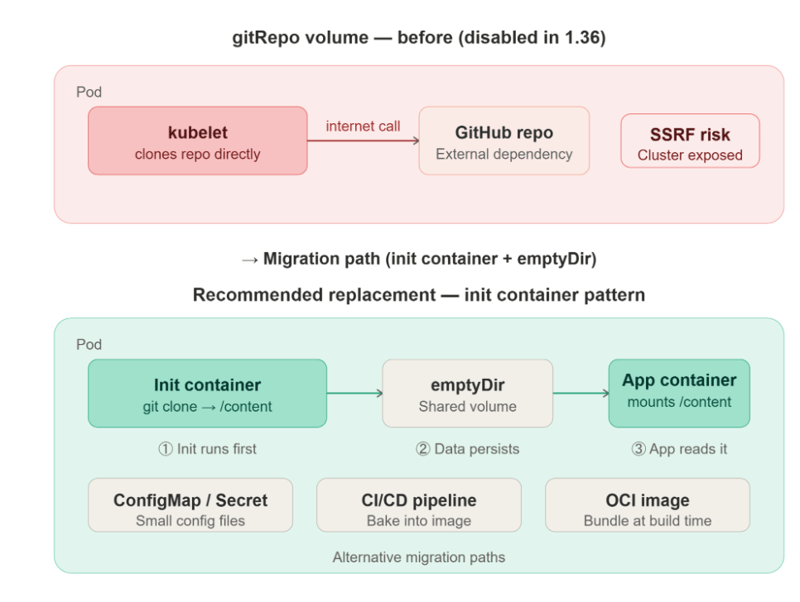

gitRepo volume has been permanently disabled. Honestly, this is one of the better removals in recent memory. Everyone gets to do their own job now. The kubelet's job is not to reach out to the internet and clone repositories. Beyond that, a compromise in a Git repository could have put the entire cluster at risk. Replacing an insecure convenience feature with a more secure and equally practical alternative is a clear win for security. The kubelet stays in its lane, and credentials are managed properly through Secrets.
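For workloads that still rely on gitRepo, the usual replacement is an init container that clones into an emptyDir shared with the main container. The sketch below assumes a public repository and uses placeholder images and URLs; a private repository would additionally need credentials mounted from a Secret.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: app-with-repo                    # hypothetical name
spec:
  initContainers:
    - name: clone-repo
      # Assumption: an image whose entrypoint is the git binary
      image: alpine/git:latest
      args: ["clone", "--depth=1", "https://example.com/org/repo.git", "/repo"]
      volumeMounts:
        - name: repo
          mountPath: /repo
  containers:
    - name: app
      image: nginx:1.27                  # placeholder application image
      volumeMounts:
        - name: repo
          mountPath: /usr/share/nginx/html
          readOnly: true
  volumes:
    # Scratch volume shared between the init container and the app
    - name: repo
      emptyDir: {}
```

The clone now runs inside a container with its own image, limits, and security context, instead of inside the kubelet.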

💡 Note: A few things worth checking before you act on any of these storage changes:

- SELinux Mount Relabeling only applies to CSI-backed volumes; in-tree plugins still use the old behavior. It doesn't enable itself after upgrade, so you need to configure SELinuxChangePolicy explicitly. If your driver doesn't support it, it silently falls back, so verify your driver's changelog first.

- CSIServiceAccountTokenSecrets support depends entirely on your CSI driver. Not all drivers have implemented it yet, and token rotation handling varies, so check before relying on it in production.
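On the Pod side, the opt-in lives in the Pod-level securityContext. A minimal sketch, assuming the volume is served by a CSI driver whose CSIDriver object advertises seLinuxMount: true; the Pod name, image, PVC name, and SELinux level are illustrative.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: selinux-mount-demo               # hypothetical name
spec:
  securityContext:
    seLinuxOptions:
      level: "s0:c123,c456"              # example MCS level
    # Ask for the label to be applied as a mount option instead of
    # relabeling files recursively. The other value is "Recursive".
    seLinuxChangePolicy: MountOption
  containers:
    - name: app
      image: registry.example.com/app:1.0   # placeholder image
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: data-pvc              # hypothetical PVC on a CSI volume
```

Since a volume mounted with a context option carries a single label, Pods that share the volume need to agree on their SELinux label.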

Workload Management

Beyond the storage and security changes, 1.36 brings a meaningful quality-of-life improvement for batch workload management. The MutablePodResourcesForSuspendedJobs and MutableSchedulingDirectivesForSuspendedJobs feature gates are now enabled by default.

Being able to suspend a Job and resume it with updated resources is particularly valuable for workloads that depend on each other. Previously, killing a Job mid-flight and restarting it from scratch would lock up the entire pipeline. Now there is much more control: you can adjust node selectors, tolerations, and affinity rules while the Job is suspended, then pick up right where things left off. For AI/ML batch workflows especially, this kind of flexibility matters.

    # Suspend the job
    kubectl patch job ml-training-job -p '{"spec":{"suspend":true}}'

    # Update the scheduling directive while suspended
    kubectl patch job ml-training-job -p '{
      "spec": {
        "template": {
          "spec": {
            "nodeSelector": {"node-type": "gpu"}
          }
        }
      }
    }'

    # Resume the job
    kubectl patch job ml-training-job -p '{"spec":{"suspend":false}}'

The technical reasoning behind the original constraint makes sense: the Job controller uses the spec as a template when creating pods. If the spec changes mid-run, the controller loses track of which pods belong to which version. With a suspended Job, there are no pods; the controller starts fresh, so the constraint can safely be lifted.
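The same idea applies to resource requests. As a sketch, a patch file like the one below could be applied to the suspended Job with kubectl patch job ml-training-job --patch-file resources-patch.yaml. The file name, the container name trainer, and the values are assumptions for illustration.

```yaml
# resources-patch.yaml (hypothetical file name)
spec:
  template:
    spec:
      containers:
        # Strategic merge patch matches containers by name;
        # "trainer" is an assumed container name.
        - name: trainer
          resources:
            requests:
              cpu: "8"
              memory: 32Gi
            limits:
              cpu: "8"
              memory: 32Gi
```

Apply it only while spec.suspend is true, then flip suspend back to false so the controller creates pods from the updated template.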

Developer Experience

Though it stays in the shadow of bigger changes, 1.36 also makes daily kubectl usage a bit more comfortable.

kubectl kuberc is promoted to Beta. The .kuberc file lets you store kubectl preferences and aliases separately from your kubeconfig. With the Beta promotion, you no longer need to set the KUBECTL_KUBERC=true environment variable manually.

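    apiVersion: kubectl.config.k8s.io/v1beta1
    kind: Preference
    aliases:
      # "kubectl listpods" expands to "kubectl get pods --output=wide"
      - name: listpods
        command: get
        prependArgs:
          - pods
        options:
          - name: output
            default: wide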

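kubectl get node -o wide also gains an ARCH column, which makes mixed amd64/arm64 clusters easier to read at a glance:

    kubectl get node -o wide
    # NAME       STATUS   ROLES    AGE   VERSION   INTERNAL-IP   OS-IMAGE   KERNEL-VERSION   ARCH
    # node-1     Ready    worker   10d   v1.36.0   10.0.0.1      Ubuntu     5.15.0           amd64
    # node-arm   Ready    worker   5d    v1.36.0   10.0.0.2      Ubuntu     5.15.0           arm64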

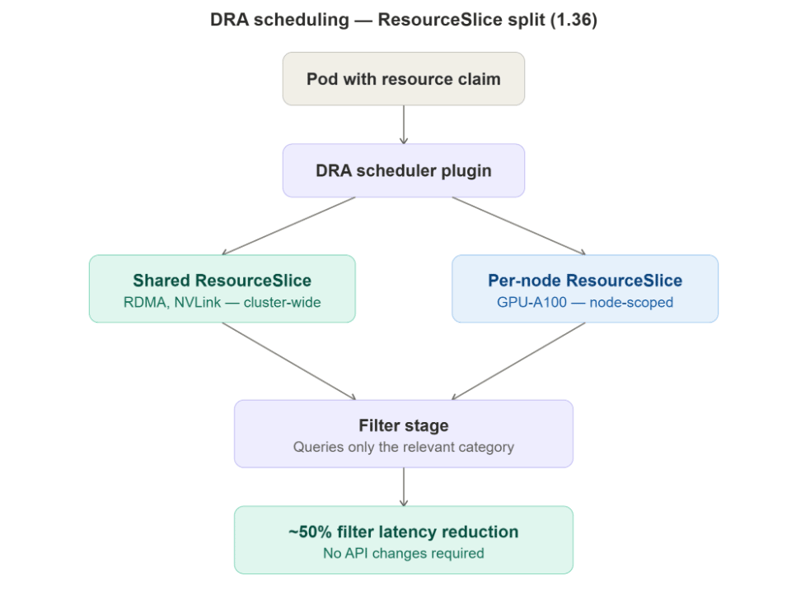

DRA scheduling performance improved by approximately 50%. The Dynamic Resource Allocation scheduler plugin was restructured to split ResourceSlice entries into shared and per-node categories. This produces a noticeable reduction in Filter stage latency in large clusters. No API changes are required.

Other Notable Changes

kubectl diff can now show secret values. The new --show-secret flag lets you view secret contents unmasked during a manifest diff. The default behavior is unchanged: secrets remain hidden unless the flag is explicitly passed. It closes a gap that has been a minor annoyance in debugging workflows.

    kubectl diff -f deployment.yaml --show-secret

PreBind plugins can now run in parallel. PreBind plugins, which run just before the binding phase in the scheduler, have always executed sequentially. In 1.36, plugins can opt into parallel execution by returning AllowParallel: true. This produces a measurable improvement in binding latency for large clusters under heavy scheduling load. A note for plugin authors: the PreBindPreFlight method now needs to return PreBindPreFlightResult instead of nil.

Audit log rotation behavior has changed. Setting --audit-log-maxsize=0 now fully disables log rotation. In previous versions, the same flag would silently fall back to the default 100MB limit. Additionally, --audit-log-maxage now defaults to 366 days and --audit-log-maxbackup defaults to 100. It is worth reviewing your current audit log configuration to make sure these new defaults align with your expectations.
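One way to stay independent of changing defaults is to pin all three rotation flags explicitly. The fragment below is a sketch of a kubeadm-style static Pod manifest for the API server; the file paths and the chosen values are examples, not recommendations.

```yaml
# /etc/kubernetes/manifests/kube-apiserver.yaml (fragment, paths are examples)
spec:
  containers:
    - name: kube-apiserver
      command:
        - kube-apiserver
        - --audit-policy-file=/etc/kubernetes/audit-policy.yaml
        - --audit-log-path=/var/log/kubernetes/audit.log
        # Rotate at 100 MB; 0 would now disable rotation entirely
        - --audit-log-maxsize=100
        # Keep rotated files for 30 days, and at most 10 of them
        - --audit-log-maxage=30
        - --audit-log-maxbackup=10
```

If a cluster currently sets --audit-log-maxsize=0 and relied on the old fallback, this is the place to replace it with a real size before the upgrade.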

Deprecations & What to Watch For

The removals in this release deserve just as much attention as the new features. The following changes need to be verified before upgrading.

gitRepo volume is disabled. This is covered in detail in the Storage section, and there is no way to re-enable it. Audit your workloads before upgrading.
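One way to surface remaining usage before the upgrade is a ValidatingAdmissionPolicy that only warns and audits. The sketch below flags any newly created Pod that declares a gitRepo volume; the object names are hypothetical, and it does not see Pods that already exist, so those still need a separate check.

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicy
metadata:
  name: flag-gitrepo-volumes             # hypothetical name
spec:
  failurePolicy: Ignore
  matchConstraints:
    resourceRules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["CREATE"]
        resources: ["pods"]
  validations:
    # Passes when the Pod has no volumes or none of them is a gitRepo volume
    - expression: "!has(object.spec.volumes) || object.spec.volumes.all(v, !has(v.gitRepo))"
      message: "gitRepo volumes are disabled in Kubernetes 1.36; clone in an init container instead."
---
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicyBinding
metadata:
  name: flag-gitrepo-volumes-binding     # hypothetical name
spec:
  policyName: flag-gitrepo-volumes
  # Warn the client and annotate the audit event, but do not reject
  validationActions: ["Warn", "Audit"]
```

Because Pods created by Deployments, Jobs, and other controllers also pass through admission, the warnings and audit annotations cover templated workloads as they roll.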

Flex-volume support has been removed from kubeadm. If you rely on flex-volume plugins and use kubeadm, you will need to build a custom KCM image and configure --flex-volume-plugin-dir manually before upgrading. SIG-Storage has been recommending migration away from flex-volumes since 1.22.

The Portworx in-tree volume driver has been removed. If you use Portworx, make sure you are already on the CSI driver before upgrading.

IP/CIDR validation has been tightened (Beta). Non-canonical IP formats like 010.000.001.005 and ambiguous CIDRs like 192.168.1.5/24 are no longer accepted in Kubernetes objects. This change has been emitting warnings for several releases; in 1.36, it becomes a hard rejection. If any tooling in your pipeline generates IP or CIDR values programmatically, now is the time to audit it.
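As an illustration of what "canonical" means in practice, here is a NetworkPolicy sketch using the values from the paragraph above. The policy name and namespace are made up; the comments mark which spellings would be rejected.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-office-range               # hypothetical name
  namespace: default
spec:
  podSelector: {}
  policyTypes: ["Ingress"]
  ingress:
    - from:
        - ipBlock:
            # Canonical: host bits are zero.
            # "192.168.1.5/24" names the same network but would be rejected.
            cidr: 192.168.1.0/24
        - ipBlock:
            # Canonical: no leading zeros.
            # "010.000.001.005/32" would be rejected.
            cidr: 10.0.1.5/32
```

Templating tools that pad octets or derive a CIDR from a host address plus a prefix length are the usual sources of the rejected forms.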

Conclusion

With 1.36, Kubernetes keeps getting better suited to high-performance, AI-driven services. Security is tightened, Kubernetes-native solutions keep replacing external dependencies, and the platform inches closer to being a first-class home for AI workloads. It is not a flashy release, but it is a focused one, and that is exactly what production-grade infrastructure needs.

Before upgrading, two things need to be verified: whether any workloads still use gitRepo volumes, and whether any tooling generates non-canonical IP or CIDR formats. Once those are cleared, 1.36 is a straightforward and worthwhile upgrade.

The official release notes will be available when 1.36.0 ships on April 22, 2026.

Sait Bütün

Platform Engineer @kloia